The Singularity Was a Procurement Decision

How institutions buy the future they later claim they didn’t choose.

Somewhere in Canberra, artificial intelligence becomes real in a room with bad coffee.

Not in the room where a frontier lab announces a new model. Not on the stage where a founder shows software planning a holiday, writing code, reading contracts, booking meetings and smiling politely while it does the work of three junior staff. The more important room is duller than that. Someone has to approve the cloud spend. Someone has to decide whether the licence includes the new AI layer. Someone has to ask where the data sits, who can audit the logs, whether the price rise is capped, whether the department can leave in five years without tearing half its nervous system out of the wall.

Here is my claim. The AI frontier most people meet in 2026 will be shaped less by a sudden leap in machine mind than by ordinary procurement choices. Which agreements close. Which vendors get inside the workflow. Which clauses define “human oversight.” Which data centres get fast-tracked. Which departments decide that a pilot has become infrastructure because everyone has already started using it.

The Singularity, if it arrives in ordinary institutions, will arrive on a purchase order.

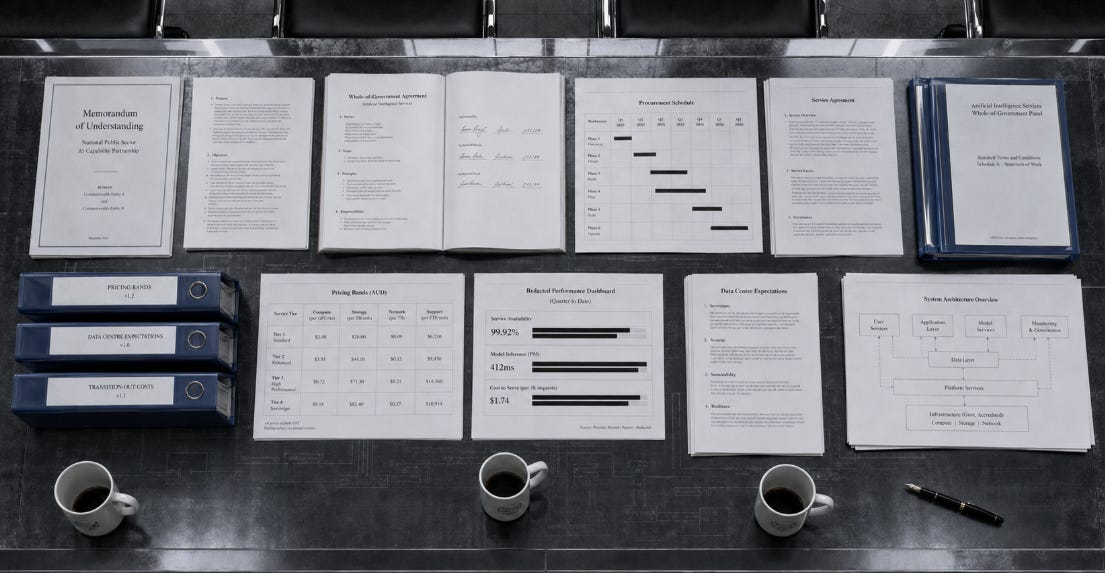

This sounds less interesting than the usual story. The usual story gives us a lab, a threshold, a machine waking up. It gives us a clean before and after. Procurement gives us meeting papers, panels, service schedules, pricing bands, memoranda of understanding, off-ramps nobody expects to use, and a contract manager who has three other files open. But that is how institutions change. They rarely announce that a new world has begun. They buy the tools that make the old world impractical.

Australia is a good place to watch this happen. Not because Australia is the centre of artificial intelligence, but because the machinery is visible enough to read. In April 2026 Microsoft announced an A$25 billion commitment to Australia, about US$18 billion, to expand AI and cloud infrastructure, cyber defence cooperation and workforce skilling by the end of 2029. The announcement was underpinned by a memorandum of understanding with the Australian Government and tied to the government’s expectations for data centres and AI infrastructure developers.

The surface story is investment. The deeper story is permission. A hyperscaler does not spend that kind of money because it has fallen in love with the Southern Hemisphere. It spends because the future market is becoming legible: government workloads, university workloads, health workloads, public-sector AI, private-sector AI, secure cloud, sovereign-ish arrangements, skills programs, cyber partnerships and the data-centre approvals needed to hold the whole thing up. The investment is presented as technology. It is also a bet on the shape of Australian bureaucracy.

A month earlier the Australian Government had published expectations for data centres and AI infrastructure developers. These expectations cover the national interest, energy, water, jobs, skills, research, innovation and local capability. The government says proposals that align with them will be prioritised, while energy-intensive proposals that do not align will not be prioritised in Commonwealth regulatory assessments. Large compute providers are expected to support access for Australian startups, researchers and not-for-profits on favourable terms.

That is not a side issue. It is the material politics of intelligence. The future model needs electricity before it needs philosophy. It needs land, cooling, grid connections, planning approvals, local tolerance and a story about national benefit. When governments decide which AI infrastructure gets priority, they are deciding which forms of machine intelligence become cheap, local, secure and normal.

The United States made the same point more bluntly in March 2026, when Amazon, Google, Meta, Microsoft, OpenAI, Oracle and xAI signed the Ratepayer Protection Pledge. The pledge says these companies should build, bring or buy the power needed for data centres, pay for required grid upgrades, and use separate rate structures so households are not left carrying the cost of AI infrastructure. It is voluntary, which matters, but the political message is still clear: AI is now large enough that someone has to say who pays for the power.

That is a healthier question than “is the machine conscious?” It is also less flattering to everyone involved. “Can AI think?” lets the debate drift toward metaphysics, personality and awe. “Who pays for the substation?” brings the matter back to earth. The model may be trained in a data centre, but the society around the model is trained through bills, approvals and contracts.

This is why the word “procurement” deserves more respect than it gets. Procurement is treated as clerical. It is where the exciting policy goes after the minister has spoken and before the invoices arrive. But procurement is the place where public values either acquire teeth or lose them. A government can say it wants responsible AI, sovereign capability, worker augmentation, privacy, transparency and local benefit. The contract decides which of those words survives contact with price, convenience and vendor power.

Look at the Australian Digital Transformation Agency’s new Microsoft Volume Sourcing Agreement, VSA6. It begins on 1 July 2026 and runs for five years. The agency describes it as providing stable pricing, improved discounts, capped price increases, better governance and reporting, stronger security and liability protections, and support for emerging technologies including AI. On paper, this is a software agreement. In practice, it is one of the rails on which the Australian Public Service will run.

The important phrase is not “Microsoft.” It is “whole of government.” When a central agency negotiates software, cloud, identity, productivity tools and emerging AI capability for government use, it is selecting a working environment. The agreement decides the defaults public servants will inhabit when they write advice, summarise records, search documents, manage correspondence, collaborate across agencies, prepare ministerial briefs and increasingly ask machine systems to help do those things.

Defaults are not neutral. A model does not need to be the best model in the world to become the model that shapes public work. It needs to be the model that is already inside the approved environment, already covered by the agreement, already familiar to staff, already blessed by security, already bundled into the subscription, already cheaper to extend than to contest. By the time a rival system is technically better, the institutional question may no longer be “which AI should we use?” It may be “why would we go through procurement again?”

The government’s own review of whole-of-government Single Seller Arrangements saw part of this. These arrangements delivered at least A$1.6 billion in discounts between 2019 and 2024, which is not trivial. They also sit on top of deeper technology choices already made by agencies. The review notes that by the time a seller qualifies for such an arrangement, technology reliance is likely already present. That sentence should make every parliament uncomfortable. Dependence can exist before the procurement system formally recognises it.

This is the procurement version of gravity. You do not choose the planet after you are already falling. You rationalise the fall, negotiate the best possible landing, and call the savings value for money.

I am not sneering at the officials who do this work. Most of them are trying to get secure tools at a defensible price under rules that require fairness, probity and value. They know the vendor has leverage. They know agencies need working systems. They know ministers want visible progress and that public servants will route around bad tools if the official tools are unusable. The spreadsheet is not a conspiracy. It is more troubling than that. It is normal administration under pressure.

The Commonwealth Procurement Rules already contain a better theory of AI governance than many AI manifestos. They say value for money is the core rule, but price is not the only factor. Officials must consider quality, fitness for purpose, supplier conduct, flexibility, environmental sustainability and whole-of-life costs. Those whole-of-life costs include transition-out costs, licensing, later features, maintenance, decommissioning and disposal.

Read that phrase slowly: whole-of-life costs. For ordinary software, it means the cost of buying, running, maintaining and leaving the system. For AI, it has to mean more. It should include the cost of institutional dependence. The cost of staff no longer practising the work the system now performs. The cost of records that cannot be understood without the tool that helped produce them. The cost of a public servant learning to supervise outputs without ever having done the slow task that would have taught her what good looks like. The cost of a department discovering, three years in, that the exit clause exists legally but not practically.

A bad AI contract will not necessarily look bad at the start. It may look efficient, secure and modern. It may come with discounts, training modules, dashboards, a governance board, an account team and a pilot program that everyone agrees is low risk. The danger is not the first use. The danger is the quiet migration from assistance to assumption.

At first the AI drafts the summary. Then the AI summary becomes what everyone reads. At first it helps prepare the brief. Then the brief is shaped around what the system can retrieve and rank. At first a human checks the recommendation. Then the human checks only exceptions because the queue is too long. At first staff are told the system saves time. Then the saved time is removed from the staffing model. At first the tool supports judgement. Later, judgement is what happens when the tool is unavailable.

This is the agentic frontier as institutions will actually meet it. The public imagination still pictures agents as little digital people with plans. Bureaucracies will meet them as workflow components. They will draft, classify, route, escalate, compare, recommend, monitor and prepare the record of what happened. The agents most people encounter will look less like rogue geniuses than compliant junior staff who never sleep, never join the union, never admit uncertainty unless prompted correctly, and produce enough documentation to make management feel safe.

That last part matters. Bureaucracies will shape the agents as much as agents shape bureaucracies. Public-sector buyers will not ask only for raw intelligence. They will ask for logs, access controls, data boundaries, audit trails, procurement compliance, security accreditation, retention settings, disability adjustments, local hosting, service credits, privacy language, training, dashboards and someone to call when the minister’s office wants an answer by 4 pm. The model that wins the institution may be less brilliant than the model that fits the institution’s paperwork.

That is why 2026 matters. The labs may still be racing on capability, but the institutional future is being settled through buying cycles. Agreements signed now will define which systems are normal by 2028. Data centres approved now will define where compute is cheap. Skilling programs funded now will define which vendor’s tools become the assumed form of AI literacy. Advisory structures created or abandoned now will define who gets to be in the room before the defaults harden.

The advisory question is not abstract. In the 2024–25 Budget, the Australian Government funded a reshaped National AI Centre and an AI advisory body as part of a broader safe and responsible AI package. By late 2025, reporting based on Senate Estimates answers said the government would not proceed with the permanent advisory body, relying instead on existing mechanisms, targeted consultations and the Australian AI Safety Institute.

There may be defensible reasons for that decision. Standing bodies can become ornamental. Targeted consultation can be faster. Safety institutes can build technical depth. But the move still changes the room. A standing advisory body gives civil society, academia, industry and practitioners a visible, recurring place in the machinery. Targeted consultation gives government more discretion over who is asked, when, and on what terms. In AI policy, that is not process trivia. It is part of the settlement.

The National AI Plan, released in December 2025, sets out a broad Australian agenda: capture the opportunity, spread the benefits, and keep Australians safe. It links AI to infrastructure, domestic capability, investment, adoption, workforce training, public services, safety and regulation. The separate plan for the Australian Public Service says agencies will have Chief AI Officers, public servants will get training and guidance, AI use will be tracked and reported, and human decision-makers will remain accountable for key decisions made with AI assistance.

That is the correct terrain. It is also where words can become slippery. “Human decision-maker” sounds reassuring until you ask what kind of human, with how much time, authority and understanding. “AI assistance” sounds modest until the assistant drafts the options, ranks the evidence, frames the risks and prepares the record. “Accountability” sounds settled until the accountable person cannot explain how the system reached the recommendation because the procurement process treated explainability as a feature rather than a condition.

The Castlereagh Statement comes from a different register. It is an education statement, not a procurement instrument, shaped by educators, leaders and students across schools, VET, universities, government, industry and the arts. It argues that Australia is not short of expertise, but short of coordination and courage. Its centre of gravity is human formation: what education and training should become when information and cognitive production are no longer scarce in the old way.

The danger is that statements like Castlereagh get politely absorbed into plans while procurement quietly decides the operating reality. Educators ask what kind of person an AI-shaped education should form. Procurement asks which platform integrates with the learning management system. Both questions matter, but only one usually has a budget line attached. That is how human-centred language becomes platform-centred practice without anyone needing to betray it openly.

A university can say it values judgement, then buy systems that reward output speed. A school system can say AI should serve pedagogy, then procure tools that make pedagogy fit the tool. A government can say workers will be augmented, then fund workflows that remove the slow tasks through which junior staff became competent. A nation can say it wants sovereign AI capability, then train a generation inside foreign-owned platforms because the licences were available, secure and discounted.

Again, this is not an argument for purity. It is too late for purity, and purity was probably never the right aim. Public institutions need AI. So do universities, hospitals, councils, libraries, courts, small businesses and community services. The question is not whether they use it. The question is whether they buy it in ways that preserve the human capacities they still claim to value.

That question is hard because the benefits are real. AI can reduce backlogs, help staff draft clearer material, make services more accessible, support translation, improve search, assist research, strengthen cyber defence and give exhausted workers some time back. A procurement critique that ignores those benefits becomes theatre. People adopt these tools because the workday is already too full and the tool helps.

Convenience has political force. A technology that arrives as coercion can be resisted as coercion. A technology that arrives as relief borrows legitimacy from exhaustion. By the time the organisation notices that the relief has become dependence, the staff are already trained, the workflows already redesigned, the budget already adjusted and the old way already described as inefficient.

This is why contract language matters. If the contract says “human oversight,” define the oversight. If it says “audit,” say who audits, with what access, how often, and after what kind of incident. If it says “data may be used to improve services,” say whose services and whose data. If it says “transition out,” cost the transition as if the institution might actually need to leave. If it says “responsible AI,” make responsibility operational enough that someone can fail it.

The Productivity Commission should be able to disagree with this argument. That is a feature, not a flaw. It could say that whole-of-government procurement pools bargaining power, reduces duplication, improves security, lowers costs and helps the public sector adopt productivity-enhancing tools faster. Its 2025 interim work on data and digital technology explicitly treated AI productivity as something policy should enable, not merely fear.

Good. That is the debate worth having. Not AI as miracle or monster. AI as a set of institutional bargains with measurable benefits, hidden dependencies, distributional effects and exit costs. Someone should be able to ask whether the discounts outweigh the lock-in. Whether local compute access offsets foreign platform dependence. Whether training three million people in AI skills builds general capability or mainly expands a vendor ecosystem. Whether a voluntary ratepayer pledge is enough when the grid costs are real. Whether advisory mechanisms are broad enough before infrastructure decisions become irreversible.

This is where philosophy should be reading. Not only the model card, not only the benchmark, not only the alignment paper, but the procurement framework. The contract is where metaphysics becomes administration. It says what counts as action, who counts as the actor, what must be logged, when a human must appear, who can appeal, who carries liability and who pays when the system fails.

The old Singularity story asks us to imagine a machine crossing a threshold. The procurement story asks us to notice the thresholds already being crossed in smaller ways: a draft becomes the record, a recommendation becomes the default, a workflow becomes too expensive to reverse, an assistant becomes the way the institution remembers how to act. No one moment carries the whole drama. That is why the drama is easy to miss.

There is no need to imagine a machine seizing the state. The more plausible risk is that the state, the university, the hospital and the firm each buy a helper, build processes around the helper, remove the slack the helper seemed to create, and then discover that the helper is no longer optional. The machine does not need to conquer the institution if the institution purchases dependence and calls it modernisation.

The future will feel reasonable. Cheaper. Secure. Like keeping up. It will come with training, governance, dashboards and a reassuring sentence about humans remaining accountable. It will be approved by people doing their jobs.

By the time the old democratic question arrives, “Did we choose this?”, the answer will be difficult to locate. The choice will be spread across budget measures, MoUs, data-centre approvals, whole-of-government agreements, security settings, capped price increases, consultation papers, pilots, renewals and transition clauses nobody tested.

That paper trail is where the institutional Singularity is already taking shape.

Someone scoped it. Someone costed it. Someone accepted the risk.

Someone signed.